|

Four years after the launch of GDPR and one year after Apple’s App Tracking Transparency release, marketers are still grappling with the reality of the privacy-first era, which has turned the marketing “best practices” of the last decade on their head and exposed how much uncertainty remains. In this on-demand webinar, BlueConic’s COO and President, Cory Munchbach, was joined by Forrester Analyst and guest speaker, Stephanie Liu, to debunk 4 myths of consumer data privacy that are holding marketers back. The presentation covers:

The post Debunking the 4 myths of consumer data privacy that are holding marketers back appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/debunking-the-4-myths-of-consumer-data-privacy-that-are-holding-marketers-back-387226


Natural language processing opened the door for semantic search on Google. SEOs need to understand the switch to entity-based search because this is the future of Google search. In this article, we’ll dive deep into natural language processing and how Google uses it to interpret search queries and content, entity mining, and more.

What is natural language processing?

Natural language processing, or NLP, makes it possible to understand the meaning of words, sentences and texts to generate information, knowledge or new text. It consists of natural language understanding (NLU) – which allows semantic interpretation of text and natural language – and natural language generation (NLG). NLP can be used for:

The following are the core components of NLP:

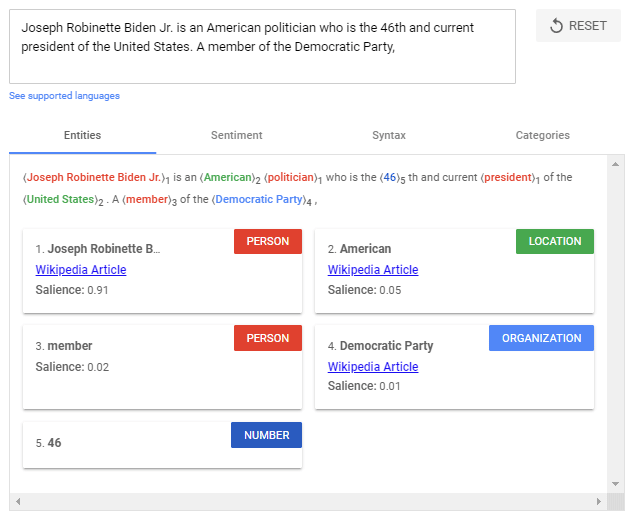

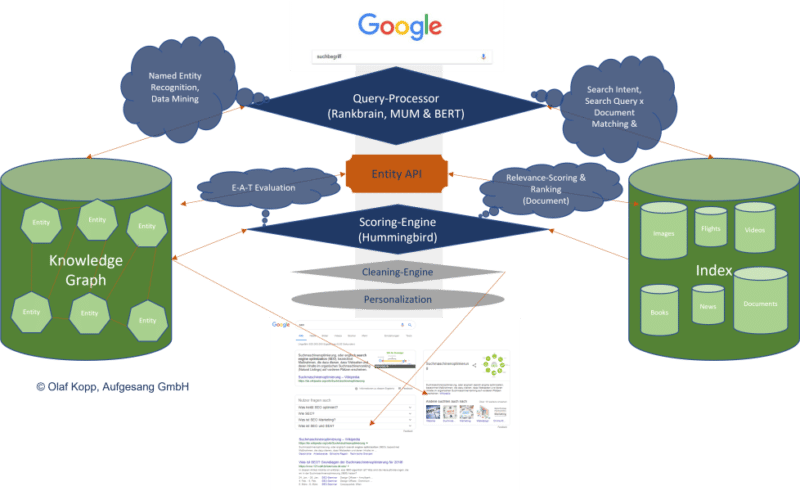

The use of NLP in search

For years, Google has trained language models like BERT or MUM to interpret text, search queries, and even video and audio content. These models are fed via natural language processing. Google search mainly uses natural language processing in the following areas:

Google highlighted the importance of understanding natural language in search when they released the BERT update in October 2019.

BERT & MUM: NLP for interpreting search queries and documents

BERT is said to be the most critical advancement in Google search in several years after RankBrain. Based on NLP, the update was designed to improve search query interpretation and initially impacted 10% of all search queries. BERT plays a role not only in query interpretation but also in ranking and compiling featured snippets, as well as interpreting text questionnaires in documents.

The rollout of the MUM update was announced at Search On ’21. Also based on NLP, MUM is multilingual, answers complex search queries with multimodal data, and processes information from different media formats. In addition to text, MUM also understands images, video and audio files. MUM combines several technologies to make Google searches even more semantic and context-based to improve the user experience. With MUM, Google wants to answer complex search queries in different media formats and accompany the user along the customer journey.

As used for BERT and MUM, NLP is an essential step to a better semantic understanding and a more user-centric search engine. Understanding search queries and content via entities marks the shift from “strings” to “things.” Google’s aim is to develop a semantic understanding of search queries and content. By identifying entities in search queries, the meaning and search intent become clearer. The individual words of a search term no longer stand alone but are considered in the context of the entire search query.

The magic of interpreting search terms happens in query processing. The following steps are important here:

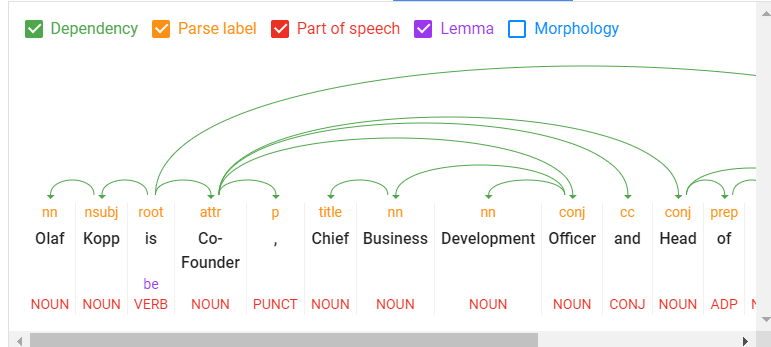

NLP is the most crucial methodology for entity mining

Natural language processing will play the most important role for Google in identifying entities and their meanings, making it possible to extract knowledge from unstructured data. On this basis, relationships between entities and the Knowledge Graph can then be created. Part-of-speech tagging partially helps with this. Nouns are potential entities, and verbs often represent the relationship of the entities to each other. Adjectives describe the entity, and adverbs describe the relationship.
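To make the noun-and-verb idea concrete, here is a minimal Python sketch. It assumes the sentence has already been tagged by some part-of-speech tagger; the tag names, the hand-tagged example sentence and the `extract_triples` helper are illustrative assumptions, not a description of how Google mines entities.

```python
from typing import List, Optional, Tuple

TaggedToken = Tuple[str, str]  # (word, part-of-speech tag)

# Assumption: tags follow a simple universal-style scheme (NOUN, PROPN, VERB, ...).
NOUN_TAGS = {"NOUN", "PROPN"}

# Hand-tagged toy sentence; a real pipeline would get these tags from a tagger.
SENTENCE: List[TaggedToken] = [
    ("Google", "PROPN"),
    ("acquired", "VERB"),
    ("YouTube", "PROPN"),
    ("in", "ADP"),
    ("2006", "NUM"),
]


def nearest_noun(tokens: List[TaggedToken]) -> Optional[str]:
    """Return the first noun-like word in the given token sequence, if any."""
    return next((word for word, tag in tokens if tag in NOUN_TAGS), None)


def extract_triples(tagged: List[TaggedToken]) -> List[Tuple[str, str, str]]:
    """Treat nouns as entity candidates and each verb as a relation between
    the closest noun before it and the closest noun after it."""
    triples = []
    for i, (word, tag) in enumerate(tagged):
        if tag != "VERB":
            continue
        subject = nearest_noun(list(reversed(tagged[:i])))
        obj = nearest_noun(tagged[i + 1:])
        if subject and obj:
            triples.append((subject, word, obj))
    return triples


print(extract_triples(SENTENCE))
```

A heuristic this simple breaks on passive voice, pronouns and multi-word names, which is part of why tagging only "partially helps" and why verifying extracted facts is the harder problem.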

Google has so far only made minimal use of unstructured information to feed the Knowledge Graph. It can be assumed that:

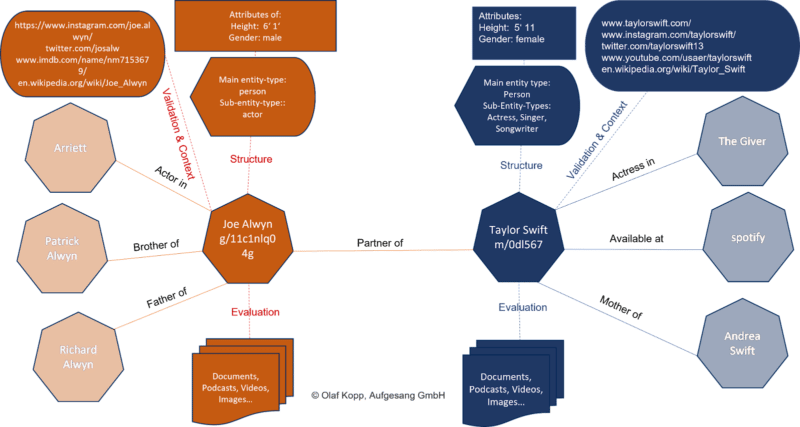

NLP plays a central role in feeding this knowledge repository. Google is already quite good at NLP but does not yet achieve satisfactory results in evaluating the accuracy of automatically extracted information. Data mining for a knowledge database like the Knowledge Graph from unstructured data like websites is complex. In addition to the completeness of the information, correctness is essential. Nowadays, Google guarantees completeness at scale through NLP, but proving correctness and accuracy is difficult. This is probably why Google is still acting cautiously regarding the direct positioning of information on long-tail entities in the SERPs.

Entity-based index vs. classic content-based index

The introduction of the Hummingbird update paved the way for semantic search. It also brought the Knowledge Graph – and thus, entities – into focus. The Knowledge Graph is Google’s entity index. All attributes, documents and digital images such as profiles and domains are organized around the entity in an entity-based index.
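The difference between the two kinds of index can be sketched with plain Python dictionaries. Everything below (the documents, entity IDs, attribute names and the `build_inverted_index` helper) is a made-up toy example for illustration; it says nothing about Google's actual data structures.

```python
from collections import defaultdict
from typing import Dict, Set

# Toy documents (hypothetical).
DOCS: Dict[str, str] = {
    "doc1": "jaguar habitat and top speed",
    "doc2": "jaguar f-type price and review",
}


def build_inverted_index(docs: Dict[str, str]) -> Dict[str, Set[str]]:
    """Classic content-based index: each term points to the documents containing it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index


# Entity-based index: attributes and documents are organized around the entity.
ENTITY_INDEX = {
    "jaguar_animal": {
        "type": "Animal",
        "attributes": {"habitat": "rainforest"},
        "documents": {"doc1"},
    },
    "jaguar_car_brand": {
        "type": "Organization",
        "attributes": {"industry": "automotive"},
        "documents": {"doc2"},
    },
}

inverted = build_inverted_index(DOCS)
print(sorted(inverted["jaguar"]))                             # term lookup cannot tell the two apart
print(sorted(ENTITY_INDEX["jaguar_animal"]["documents"]))     # entity lookup can
```

The term lookup returns both documents for "jaguar", while the entity lookup separates the animal from the car brand. That is the "strings" versus "things" distinction in miniature.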

The Knowledge Graph is currently used parallel to the classic Google Index for ranking. Suppose Google recognizes in the search query that it is about an entity recorded in the Knowledge Graph. In that case, the information in both indexes is accessed, with the entity being the focus and all information and documents related to the entity also taken into account. An interface or API is required between the classic Google Index and the Knowledge Graph, or another type of knowledge repository, to exchange information between the two indices. This entity-content interface is about finding out:

It could look like this:

We’re just starting to feel the impact of entity-based search in the SERPs as Google is slow to understand the meaning of individual entities. Entities are understood top-down by social relevance. The most relevant ones are recorded in Wikidata and Wikipedia, respectively. The big task will be to identify and verify long-tail entities. It is also unclear which criteria Google checks for including an entity in the Knowledge Graph. In a German Webmaster Hangout in January 2019, Google’s John Mueller said they were working on a more straightforward way to create entities for everyone.

NLP plays a vital role in scaling up this challenge. Examples from the diffbot demo show how well NLP can be used for entity mining and constructing a Knowledge Graph.

NLP in Google search is here to stay

RankBrain was introduced to interpret search queries and terms via vector space analysis that had not previously been used in this way. BERT and MUM use natural language processing to interpret search queries and documents. In addition to the interpretation of search queries and content, MUM and BERT opened the door to allow a knowledge database such as the Knowledge Graph to grow at scale, thus advancing semantic search at Google. The developments in Google Search through the core updates are also closely related to MUM and BERT, and ultimately, NLP and semantic search. In the future, we will see more and more entity-based Google search results replacing classic phrase-based indexing and ranking.

The post How Google uses NLP to better understand search queries, content appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/how-google-uses-nlp-to-better-understand-search-queries-content-387340

Learn which key technical SEO and website health metrics you should be including in your marketing KPIs, and how to demonstrate the impact of organic search and website health on your wider business goals. From website traffic and click-through rates to sales attribution models, SEO experts cover it all in this webinar. Register today for “Tracking Growth from Organic Search: SEO Metrics and KPIs Every Marketer Should Know” presented by Deepcrawl.

The post Webinar: SEO metrics and KPIs every marketer should know appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/webinar-seo-metrics-and-kpis-every-marketer-should-know-387418

We’re expecting the Google helpful content update to launch this week. Does the announcement have you concerned? Aleyda Solis posted a poll on Twitter to ask SEOs. The final results:

I’m surprised that so many people think their own content is helpful. But we’re all biased to think our content is great. After all, we’re producing it! That’s exactly why it’s important to have an objective, data-based way to assess content (i.e., metrics).

Just remember: Google’s new algorithm uses machine learning to detect “helpfulness” of content. So much content today is produced – and much without – so it will be interesting to see whether Google can truly distinguish between helpful and unhelpful content.

So make sure you review all of the questions and guidance Google has shared around the Panda, Core, Product Review and helpful content updates. I’ve compiled this all for you in What is helpful content, according to Google.

I’m solidly in the “unsure” camp right now. There are certainly a few sites I’ll be watching in the days following the rollout to see whether Google can detect the true depth and helpfulness of content.

Will the helpful content update be as big as Panda, Penguin or Florida?

We don’t know yet how big Google’s helpful content update will be. But we know it will have a “meaningful” impact when it hits. Marie Haynes shared some thoughts about what to expect earlier today, comparing it to Penguin in 2012. Our own Barry Schwartz has compared it to Panda from 2011. And Panda was probably the largest update following Florida in 2003, which was one of the earliest and most impactful changes to Google and SEO. But just as a quick reminder – or in case you weren’t working in SEO a decade or longer ago – those updates did a lot of damage. For those on the losing end, traffic vanished overnight. People lost their jobs. Businesses shut down. It was serious. It was estimated that Panda had a $1 billion impact.

On the other side, it’s quite possible the helpful content update will be more like past pre-announced updates (think: Mobilegeddon, which was anything but apocalyptic for most websites, or the Page Experience Update, which got tons of hype but was basically an insignificant update).

What to do now?

For now, we wait. After the helpful content update launches, as with any Google algorithm update, the first rule is don’t panic. Google said it will take two weeks for the update to roll out completely. Make sure you collect enough data to know whether any changes to your traffic are just temporary or are sticking. You want to respond, not react, to an update. If you react too quickly, you can end up doing more damage, whereas if you collect and analyze your data, read and learn everything you can about the update, you can respond more deliberately and smartly.

Were you already planning to improve or remove content from your site? If so, it’s likely because that content isn’t good or is outdated. So go on – update it or delete it. This update shouldn’t change your plans. Here are a couple of articles that may help you:

Remember, the helpful content update is a sitewide signal. That means if you have too much unhelpful content on part of your website, it could hurt your ability to rank – even if you have helpful content. This is where some people in the “My content is helpful” crowd need to be careful. Is all of your content helpful? Or just some of it?

The post SEOs feel content ahead of Google helpful content update appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/seos-react-google-helpful-content-update-387415

Twitter announced that they’ve launched three new and improved measurement solutions to all advertisers globally.

Improved Twitter pixel

The new Twitter pixel adds functionality that lets advertisers measure more actions, such as add-to-cart. Twitter has also simplified setup, troubleshooting, and the event creation process. They have also updated their Pixel Helper Chrome extension to help advertisers better understand the impact of their campaigns and to provide support when checking whether the pixel is installed properly.

Conversion API

The Conversion API (CAPI) enables advertisers to connect to the API and send conversion events to Twitter without using third-party cookies. CAPI can also be used to improve and optimize ad targeting without the pixel. Multiple data signals including Twitter Click ID or email address can be used to send conversion events to the API endpoint and help advertisers understand the path users are taking as a result of their campaigns.
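As a rough illustration of what a server-side conversion event might carry, here is a small Python sketch. The field names (`event_name`, `event_time`, `identifiers`, `click_id`, `hashed_email`), the choice to hash the email with SHA-256 and the `build_conversion_event` helper are all assumptions made for this example; consult Twitter's own Conversion API documentation for the real endpoint, authentication and payload schema. The sketch only builds the payload and does not call any API.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def hash_email(email: str) -> str:
    """Normalize an email address and hash it with SHA-256.
    Assumption: the raw address is hashed before it leaves the advertiser's server."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_conversion_event(
    event_name: str,
    click_id: Optional[str] = None,
    email: Optional[str] = None,
    when: Optional[datetime] = None,
) -> dict:
    """Build a hypothetical conversion-event payload from the available signals."""
    if not click_id and not email:
        raise ValueError("at least one identifier (click ID or email) is required")
    identifiers = []
    if click_id:
        identifiers.append({"click_id": click_id})
    if email:
        identifiers.append({"hashed_email": hash_email(email)})
    return {
        "event_name": event_name,
        "event_time": (when or datetime.now(timezone.utc)).isoformat(),
        "identifiers": identifiers,
    }


event = build_conversion_event("add_to_cart", click_id="abc123", email="User@Example.com ")
print(json.dumps(event, indent=2))
```

Because the event is assembled on the advertiser's server from first-party signals, nothing here depends on a browser cookie, which is the point of a conversion API.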

App Purchase Optimization

App Purchase Optimization enables advertisers to deliver ads to people most likely to install an app or make a purchase by using machine learning to identify audiences that are most likely to take action. In early testing, 89% of advertisers saw a reduction in cost-per-purchase. App Purchase Optimization is now available on Android, with an iOS launch in the future.

Upcoming launches. Twitter also announced a few more launches coming in the near future. These include:

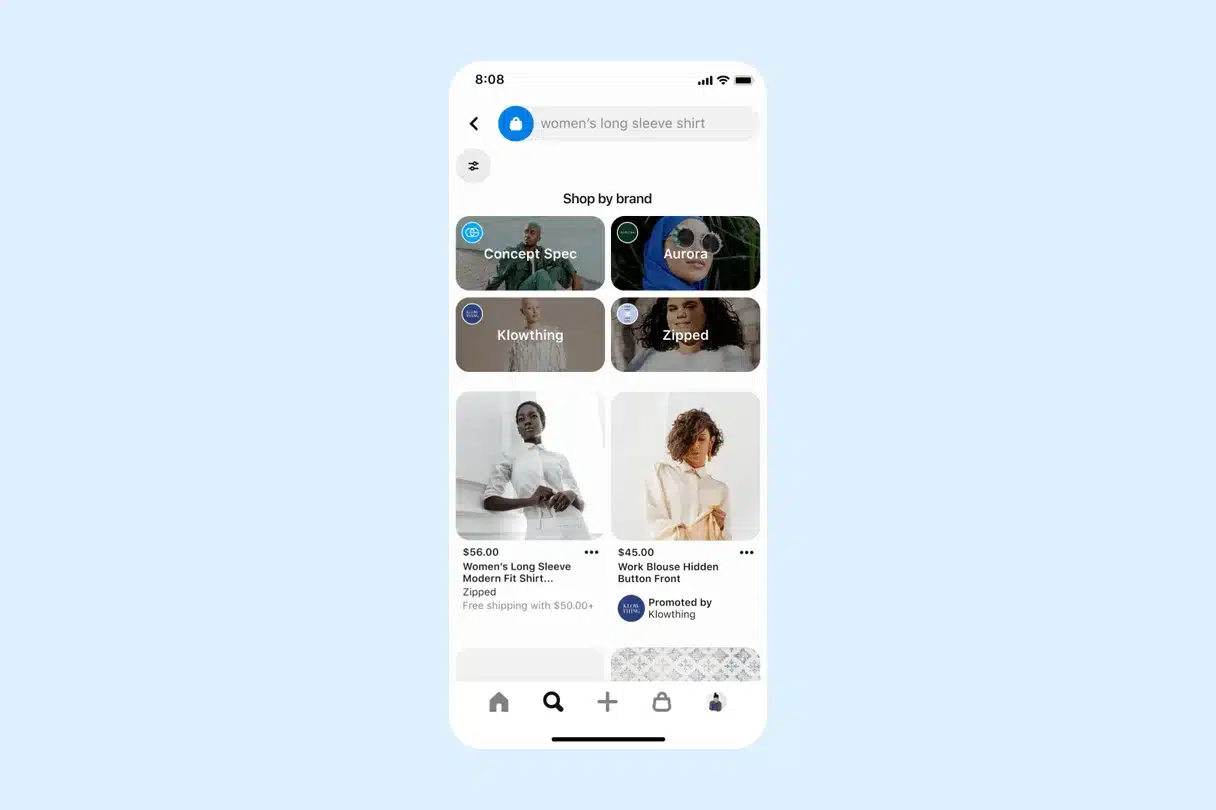

Why we care. These new updates should improve both audience and conversion measurements, giving advertisers a clearer picture of how their advertising is working for them. If you promote your products and/or services on Twitter, check out more information on how to take advantage of the new updates here.

The post Twitter has launched new and improved Pixel, Conversion API, and App Purchase Optimizations appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/twitter-has-launched-new-and-improved-pixel-conversion-api-and-app-purchase-optimizations-387409

Pinterest just introduced an easier way for shoppers to check out on their platform.

Hosted checkout is here. Hosted checkout is being launched first on Shopify and provides Pinterest merchants and advertisers an easier way for their shoppers to check out. The new feature should remove several steps from the current purchase process, which should increase conversions and decrease cart abandonments.

Improving the current process. Previously, merchants and advertisers who used Pinterest to sell products would be required to tag products on their Pins. From there, users would be directed to the website where they would need to add the product to their cart and go through the checkout process. Now merchants who use the Shopify platform can promote and sell products without requiring that buyers ever leave their page. When a buyer finds a product, they simply enter payment and shipping details and the process is complete.

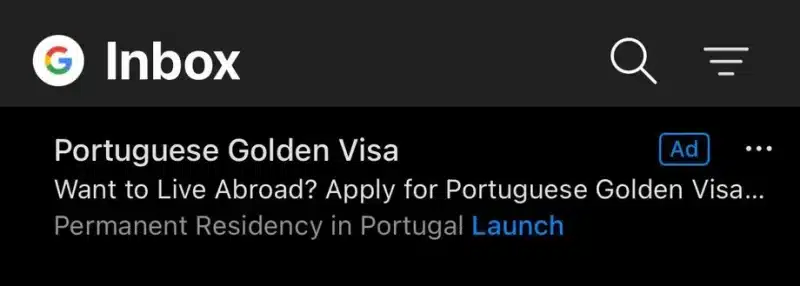

Early testing. Pinterest says that shoppers who used hosted checkout were more likely to make a purchase. They saw a “+3.9% lift in their purchase propensity and a +2.7% lift in checkouts per user compared to Pinners who did not encounter the hosted checkout experience.” For merchants, this means an increase in sales as well as boosted organic distribution in home feeds, search, and shopping surfaces.

What Pinterest says. Hosted checkout is currently available to select US merchants who are in the Pinterest Verified Merchant Program and use Shopify to sell their products on Pinterest. Eligible merchants will see the hosted checkout section at the top of the shopping settings in the Shopify app. If you see the option, turn it on, and you’re ready to go. To learn more about hosted checkout, you can read their announcement here.

Why we care. Early tests look promising, and any attempt to make the checkout process easier and faster for shoppers is a plus. Advertisers and merchants who use Pinterest to promote their products may see their sales increase when they use hosted checkout. To see if you’re eligible and to test the new experience, visit your Pinterest Merchant Center.

The post Pinterest introduces hosted checkout for merchants appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/pinterest-introduced-hosted-checkout-for-merchants-387404

Microsoft has started putting ads in Outlook on iOS and Android, and the response from users has been less than accepting.

What’s the deal. In Outlook, users have two options for organizing their inbox. You have a “focused” tab with important mail and an “other” tab with everything else. Previously, Microsoft had only put the ads in the “other” tab for free subscribers, but now users are starting to see ads in the “focused” inbox as well.

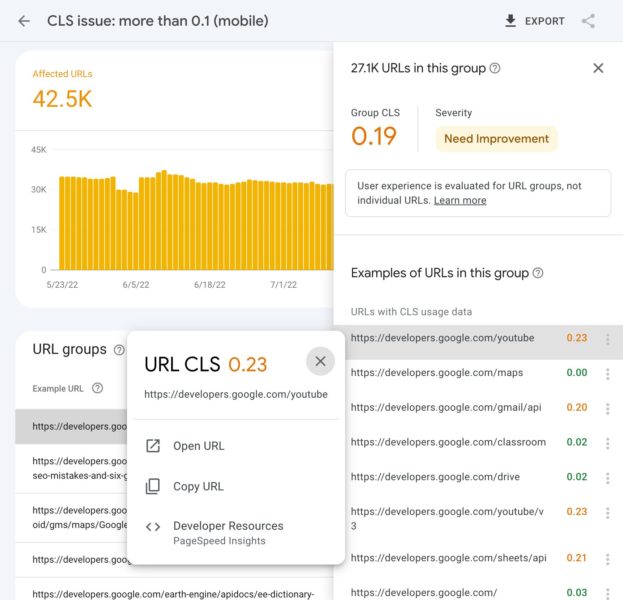

A negative response. Microsoft has been rolling out the new ads for a few months. Free users are finding it more difficult to avoid ads in Outlook mobile. However, Outlook mobile app users aren’t thrilled and many have been leaving one-star review complaints on Apple’s App Store. Seemingly the only way to avoid ads is to pay for a Microsoft 365 subscription.

What Microsoft says. “For free users of Outlook, ads are shown in their inbox and they can choose to enable the ‘Focused inbox’ feature if they would like to see ads only in the ‘Other’ inbox,” says Microsoft spokesperson Caitlin Roulston in a statement to The Verge.

Why we care. To the public, the ads may be a nuisance. This could be an attempt by Microsoft to gain more paying 365 customers. Or they’re finally catching up to Google, which launched Gmail ads in 2013. If you’re an advertiser, this could be a great opportunity for you to capitalize on a less competitive placement. In 2019 we wrote up a guide to winning with Gmail ads. To our knowledge, Microsoft hasn’t released any best practices around email ads, but if they do we’ll be sure to update you.

The post Microsoft is now putting ads in Outlook mobile appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/microsoft-is-now-putting-ads-in-outlook-mobile-387401

The Core Web Vitals report in Google Search Console is now showing URL-level data in the example URLs. Google also has made some changes to the text in the report to make it clearer.

What it looks like. Google shared a screenshot via Twitter.

Support page updated. The Core Web Vitals report support page has also undergone some significant revisions.

Why we care. These changes should make the Core Web Vitals report in Google Search Console more useful. Not being able to get detailed data in the URLs section has been a frustration for many SEOs.

The post Google Search Console adds URL-level data in Core Web Vitals report appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/google-search-console-adds-url-level-data-in-core-web-vitals-report-387391

The new helpful content update sounds like a big deal. Google has given us a list of questions to consider to determine whether our sites are designed to help people, or rather, created to do well on search engines. If the latter is the case, you may find yourself hit with a sitewide signal that makes it difficult to rank. If this update has a strong impact, which I believe it will, we may be in for another shakeup in the world of SEO like we had following the launch of Penguin 10 years ago. If you have focused more on SEO efforts than creating content for humans to benefit from, this could strongly affect your site. It still remains to be seen how powerful this ranking signal is.

Does it affect all sites that use SEO?

Google was careful to note in their blog post that this update does not invalidate following SEO best practices. They say, “SEO is a helpful activity when it’s applied to people-first content” and link to their SEO starter guide. Google is not against search engine optimization. This update is geared toward sites that have gamed the system, creating content that isn’t super helpful to people but still ranking well because of SEO rather than on the merit of the content on the site.

Is it a penalty?

Google is careful in its wording regarding whether this is a penalty. It’s not a manual action. You won’t see it listed in Google Search Console. It’s not a spam action. We are to call it a “signal”.
This is one of the many ranking signals Google describes in their documentation on How Search Works. If that signal is applied to your site, it likely will feel like a penalty. The good news is that you can get this classification removed from your site if you can improve your content. The part of Google’s algorithm that classifies sites for this update will be running continuously. If the algorithms see that your site’s content has shifted to be helpful to searchers, the strength of the signal may be reduced, or even lifted completely. This announcement reminds me of the early days of Google’s Penguin and Panda algorithms. Today these algorithms are baked into the core algorithm, but initially, they were filters that were applied to affected sites. Sites with unnatural links (Penguin) or low-quality content (Panda) would have a filter applied that suppressed ranking. If those sites cleaned up their link profile or improved the quality of their content then they had a chance at seeing recovery the next time Google ran a Penguin or Panda update.

It sounds like the helpful content classifier will have a similar effect in that sites affected will suffer some degree of sitewide ranking suppression and eventually can have that suppression lifted. But there are some significant differences:

What is people-first content?This is what Google wants us to focus on. But what is it? I’ll share my thoughts on each of the questions they say to ask ourselves about our content.

Something I’ve often said to clients when trying to explain content quality is, “Would this content still exist if it wasn’t for search engines?” A local business would still want to educate its customers on their services. The National Kidney Foundation would still publish content to educate patients and doctors. Would you still create the content you are creating if search hits from Google did not exist?

This should not be new to us! Google’s blog post on what site owners should know about core updates has a whole section of questions to ask ourselves in regards to expertise. Knowing your topic is important. I feel Google has started to promote first-hand expertise with recent product review updates. Many that were affected by the July 2022 product review update were sites that lacked legitimate first-hand expertise in using the products they were recommending. For the majority (if not all) of the sites I reviewed that were affected by this update, there was a sitewide demotion.

The search quality evaluator guidelines teach Google’s quality raters that it is important to determine a page’s purpose. Is it designed to share information? Or to sell products? Or perhaps to entertain? Why does your site exist? How are you trying to help people? It is important that the purpose of your content’s existence is clear.

I think that many people who have built sites with SEO foremost in mind will rationalize that their content is created to inform people. If you’re unsure, I encourage you to review the next two questions:

Google wants to present searchers with information that fully meets their needs.

How do I know if my content is built for search engines first?

Once again, Google has given us some questions to ask. When I read these, it feels to me that this update could have a large impact on what we see in the search results. If these questions apply to your content, you may find that your site is classified sitewide as being created primarily for search engines.

I expect some content may lie on a spectrum. Google says the signal is weighted and that “[s]ites with lots of unhelpful content may notice a stronger effect.” Super spammy sites with little actual benefit to searchers should be hit strongly. Sites with some content created primarily with SEO in mind that also have content that is helpful will be impacted, but not as strongly. It is important to remember that the sitewide effect means the good content on your site will also be affected by this update if Google deems you worthy of the classification.

Many of the sites affected by the July 2022 product reviews update were review sites that reviewed almost any product out there. There was little focus other than “we review products.” I expect we’ll see declines in many sites because they are writing on as many topics as they can rather than focusing on what is important to their users.

I wonder whether this line is geared toward sites writing their content mostly with AI content-generating tools. AI-generated content can often rank well because it contains many of the words search engines use to determine relevancy. But a person can usually tell when content is AI-written and not created by an actual human. If you are creating content by automated means, you may find yourself on Google’s radar.

This makes me think about review sites that aggregate Amazon listings and slightly re-word them. It will be interesting to see if other types of aggregator sites are hit as well.

I do think that it is still acceptable to write on trending topics provided they are what your audience wants to read. But if the focus of your site is simply to capitalize on new trends, I expect you may be affected.

The quality rater guidelines instruct the raters to determine to what extent content meets a searcher’s needs:

It is becoming increasingly important to determine what your readers’ intent is when they come to your site. And are you fully satisfying their needs? Would they need to go elsewhere for more information after reading your content?

I have seen blog posts that advise that content below a certain word count will be considered thin by Google which is not the case. Sometimes short content actually helps the searcher more. I suppose this question is written to dissuade sites from writing massive articles covering everything there is to know about a topic on one single page (unless it is meeting the need of searchers who want to read a thorough essay on a subject). This may seem like it contradicts Google’s advice to fully meet the needs of a searcher. If tasked with creating content on buying a lawn mower, many SEOs are conditioned to produce the most thorough article on lawn mowers possible. Let’s say a searcher typed, “best lawn mower.” Do they really need an article that explains “What is a lawn mower?,” “Types of lawn mowers” and also “How to start a lawn mower”? Having those words on the page historically has helped search engines understand that the page is relevant to lawn mowers. However, the searcher’s intent, in this case, is to get help in deciding which mower to buy, not to learn everything there is to know on the subject. A shorter article may be what is more helpful to a searcher in this situation.

I’ve seen a real rise lately in discussions about operating “niche sites.” I do think some of these will survive, provided the writer really is passionate about the niche and can write on relevant topics from a point of personal experience. But if you’ve picked a niche primarily based on your ability to rank for that content, rather than out of a passion for covering that topic, you may find Google does not reward you.

This seems like a specific question and is pretty straightforward.

Is recovery possible?

If your site is classified as being built primarily for search engines, you will likely see a significant decline in search traffic over the next few months. Google says that sites that are affected will indeed be able to work to get the classifier removed and possibly recover their rankings. It is important to remember that if Google sees enough SEO content on your site, the sitewide signal will also impact the remainder of the content on your site. As such, you will need to identify where the problems are and work aggressively to repair them if you want to rank at all. Here is what I will be recommending to sites that come to us for help after being affected by this update, although we’ll adapt our advice as more information becomes available:

Conclusions

Prior to the launch of this update, Google reached out to several SEOs, myself included, to discuss its release. They wanted to make it clear that this is not an attack on SEO. Good SEO can help people-first content perform even better. It sounds to me like there is the potential for this update to have a strong impact on many sites that have invested heavily in SEO. I am looking forward to participating in and watching the discussions on changes that the SEO community is seeing once this update is live. I hope you fare well!

The post Google’s helpful content update: What should we expect? appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/google-helpful-content-update-what-to-expect-387328

The cookieless future is here (despite what Google says). If you haven’t found an identity solution, it’s time. We’re doing our part at Lotame to ensure marketers, agencies and publishers are prepared and ready to conquer the cookieless future. With hundreds of campaigns launched across the globe, Lotame’s identity solution, Panorama ID, is proving that advertising on the open web is sustainable, profitable, and privacy safe.

“We’ve done our due diligence and trust Lotame Panorama ID. The predictive cookieless solution is delivering fantastic results for our leading brand portfolio in terms of scale across the open web and cost-efficiency. We’re well-positioned to grab the cookieless future by the horns — in fact, we already are!” – Miles Pritchard, managing partner, OMD – EMEA.

See the results for yourself in this collection of cookieless case studies. Access it now to see the success stories of marketers and agencies around the world including how:

Access the collection of Cookieless Case Studies Around the World now! The post Cookieless case studies: How brands are seeing a 9X lift in CTR appeared first on Search Engine Land. via Search Engine Land https://searchengineland.com/cookieless-case-studies-how-brands-are-seeing-a-9x-lift-in-ctr-387267 |

Author

Let us market on the internet and earn some money.

Archives

April 2023

|

RSS Feed
