
Natural language processing opened the door for semantic search on Google.

SEOs need to understand the switch to entity-based search because this is the future of Google search.

In this article, we’ll dive deep into natural language processing and how Google uses it to interpret search queries and content, entity mining, and more.

What is natural language processing?

Natural language processing, or NLP, makes it possible to understand the meaning of words, sentences and texts to generate information, knowledge or new text.

It consists of natural language understanding (NLU), which allows semantic interpretation of text and natural language, and natural language generation (NLG).

NLP can be used for:

The following are the core components of NLP:

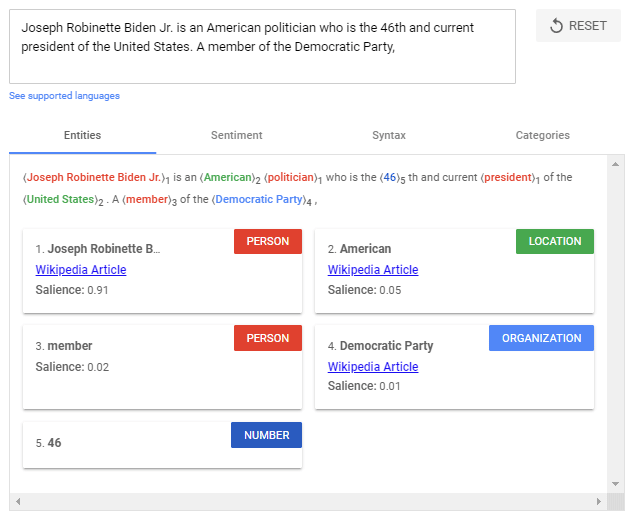

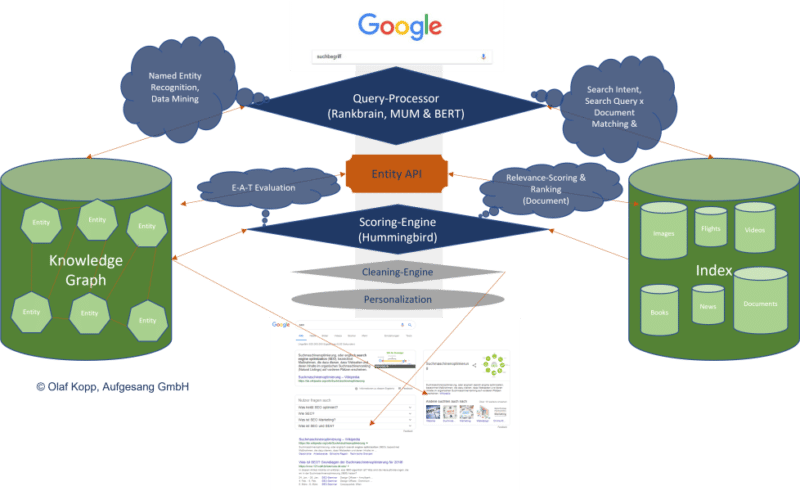

The use of NLP in search

For years, Google has trained language models like BERT or MUM to interpret text, search queries, and even video and audio content. These models are fed via natural language processing.

Google search mainly uses natural language processing in the following areas:

Google highlighted the importance of understanding natural language in search when they released the BERT update in October 2019.

BERT & MUM: NLP for interpreting search queries and documents

BERT is said to be the most critical advancement in Google search in several years after RankBrain. Based on NLP, the update was designed to improve search query interpretation and initially impacted 10% of all search queries.

BERT plays a role not only in query interpretation but also in ranking and compiling featured snippets, as well as interpreting text questionnaires in documents.

The rollout of the MUM update was announced at Search On ’21. Also based on NLP, MUM is multilingual, answers complex search queries with multimodal data, and processes information from different media formats. In addition to text, MUM also understands images, video and audio files.

MUM combines several technologies to make Google searches even more semantic and context-based to improve the user experience. With MUM, Google wants to answer complex search queries in different media formats and accompany the user along the customer journey.

As used for BERT and MUM, NLP is an essential step toward a better semantic understanding and a more user-centric search engine.

Understanding search queries and content via entities marks the shift from “strings” to “things.” Google’s aim is to develop a semantic understanding of search queries and content.

By identifying entities in search queries, the meaning and search intent become clearer. The individual words of a search term no longer stand alone but are considered in the context of the entire search query.

The magic of interpreting search terms happens in query processing. The following steps are important here:

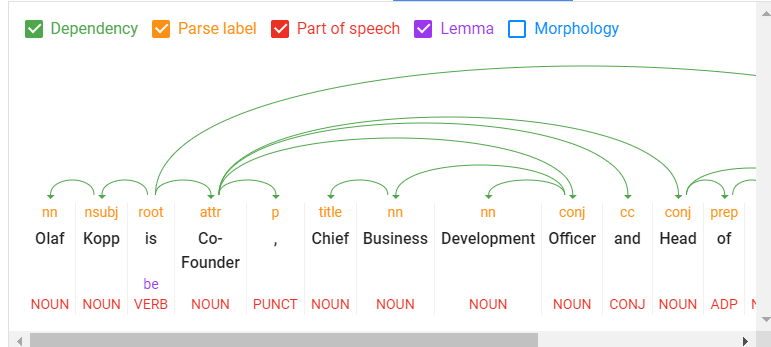
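To make the idea of identifying entities in a search query more concrete, here is a minimal Python sketch. It is not how Google's query processing works. It only illustrates the "strings to things" idea with a longest-match lookup against a tiny catalog. The catalog, the entity ids and the function name `find_entities` are all made up for this example.

```python
# Hypothetical mini entity catalog: surface form -> (entity id, entity type).
# The ids and types are invented for illustration only.
ENTITY_CATALOG = {
    "new york": ("E1", "City"),
    "new york times": ("E2", "Newspaper"),
    "paris": ("E3", "City"),
    "eiffel tower": ("E4", "Landmark"),
}

# Longest entity name in the catalog, measured in words.
MAX_SPAN = max(len(name.split()) for name in ENTITY_CATALOG)


def find_entities(query: str) -> list[tuple[str, str, str]]:
    """Return (surface form, entity id, type) for each entity found in the query."""
    tokens = query.lower().split()
    found = []
    i = 0
    while i < len(tokens):
        match = None
        # Try the longest span first so "new york times" beats "new york".
        for length in range(min(MAX_SPAN, len(tokens) - i), 0, -1):
            candidate = " ".join(tokens[i:i + length])
            if candidate in ENTITY_CATALOG:
                match = (candidate, *ENTITY_CATALOG[candidate])
                i += length
                break
        if match:
            found.append(match)
        else:
            i += 1  # no entity starts at this word, move on
    return found


print(find_entities("how tall is the eiffel tower in paris"))
```

In this toy version, the words "eiffel" and "tower" are no longer treated as separate strings. They are resolved together to one catalog entry, which is the kind of context the article describes.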

NLP is the most crucial methodology for entity mining

Natural language processing will play the most important role for Google in identifying entities and their meanings, making it possible to extract knowledge from unstructured data.

On this basis, relationships between entities and the Knowledge Graph can then be created. Part-of-speech tagging helps with this in part.

Nouns are potential entities, and verbs often represent the relationship of the entities to each other. Adjectives describe the entity, and adverbs describe the relationship.
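The tagging idea above (nouns as candidate entities, verbs as relationships, adjectives and adverbs as descriptions) can be sketched in a few lines of Python. This is a toy under stated assumptions: the hand-written lexicon `POS_LEXICON` stands in for a trained tagger, and the simple noun-verb-noun pattern is an illustration, not Google's extraction method.

```python
# Toy part-of-speech lexicon (an assumption for this sketch);
# a real system would use a trained tagger instead.
POS_LEXICON = {
    "google": "NOUN", "bert": "NOUN", "queries": "NOUN",
    "released": "VERB", "interprets": "VERB",
    "complex": "ADJ", "quickly": "ADV",
}


def tag(sentence: str) -> list[tuple[str, str]]:
    """Tag each lowercased token with the toy lexicon; unknown words get 'OTHER'."""
    return [(tok, POS_LEXICON.get(tok, "OTHER")) for tok in sentence.lower().split()]


def extract_triples(sentence: str) -> list[dict]:
    """Collect noun-verb-noun patterns as candidate entity-relation-entity triples."""
    triples = []
    subject = None
    relation = None
    adjectives = []  # describe the next noun (the object entity)
    adverbs = []     # describe the relation
    for token, pos in tag(sentence):
        if pos == "ADJ":
            adjectives.append(token)
        elif pos == "ADV":
            adverbs.append(token)
        elif pos == "VERB" and subject is not None:
            relation = token
        elif pos == "NOUN":
            if subject is not None and relation is not None:
                triples.append({
                    "subject": subject,
                    "relation": relation,
                    "object": token,
                    "object_attributes": list(adjectives),
                    "relation_modifiers": list(adverbs),
                })
                # The object may start the next triple.
                subject, relation, adverbs = token, None, []
            else:
                subject = token
            adjectives = []
    return triples


print(extract_triples("BERT quickly interprets complex queries"))
```

For the sample sentence, the sketch yields one candidate triple linking "bert" to "queries" through "interprets", with "complex" attached to the object and "quickly" attached to the relation.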

Google has so far only made minimal use of unstructured information to feed the Knowledge Graph. It can be assumed that:

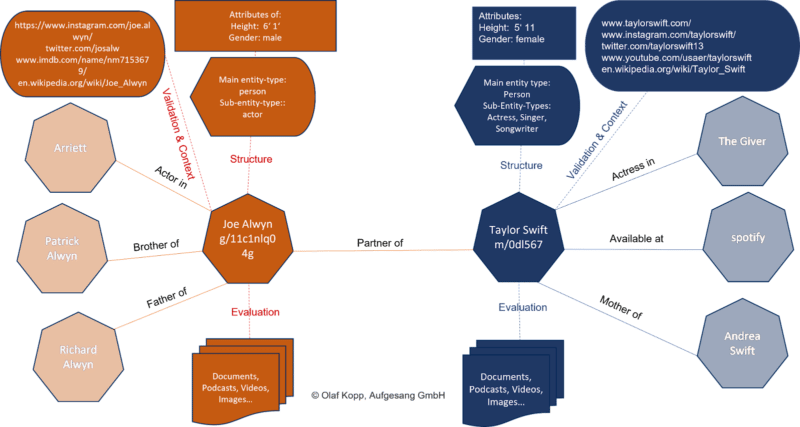

NLP plays a central role in feeding this knowledge repository. Google is already quite good at NLP but does not yet achieve satisfactory results in evaluating the accuracy of automatically extracted information.

Data mining for a knowledge database like the Knowledge Graph from unstructured data like websites is complex. In addition to the completeness of the information, correctness is essential. Nowadays, Google can achieve completeness at scale through NLP, but proving correctness and accuracy is difficult.

This is probably why Google is still acting cautiously regarding the direct positioning of information on long-tail entities in the SERPs.

Entity-based index vs. classic content-based index

The introduction of the Hummingbird update paved the way for semantic search. It also brought the Knowledge Graph, and thus entities, into focus.

The Knowledge Graph is Google’s entity index. All attributes, documents and digital images such as profiles and domains are organized around the entity in an entity-based index.

The Knowledge Graph is currently used in parallel with the classic Google Index for ranking.

Suppose Google recognizes in the search query that it is about an entity recorded in the Knowledge Graph. In that case, the information in both indexes is accessed, with the entity being the focus and all information and documents related to the entity also taken into account.

An interface or API is required between the classic Google Index and the Knowledge Graph, or another type of knowledge repository, to exchange information between the two indexes. This entity-content interface is about finding out:

It could look like this:
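As a rough illustration only, and not a description of Google's actual systems, the interplay of an entity index and a classic content index can be sketched in Python. Every name here (`Entity`, `ENTITY_INDEX`, `CONTENT_INDEX`, `search`) and all of the sample data are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """An entity with its attributes and the documents organized around it."""
    entity_id: str
    name: str
    attributes: dict = field(default_factory=dict)
    document_ids: set = field(default_factory=set)


# Hypothetical entity index, standing in for a knowledge repository.
ENTITY_INDEX = {
    "bert": Entity("E1", "BERT", {"type": "language model"}, {"doc1", "doc3"}),
}

# Classic content-based inverted index: term -> documents containing the term.
CONTENT_INDEX = {
    "bert": {"doc1", "doc2"},
    "update": {"doc2", "doc3"},
}


def search(query: str) -> dict:
    """Consult both indexes and report what each one contributes."""
    terms = query.lower().split()
    # Classic lookup: documents that contain every query term.
    postings = [CONTENT_INDEX.get(term, set()) for term in terms]
    content_hits = set.intersection(*postings) if postings else set()
    # Entity lookup: documents attached to any entity recognized in the query.
    entities = [ENTITY_INDEX[term] for term in terms if term in ENTITY_INDEX]
    entity_hits = set().union(*(e.document_ids for e in entities))
    return {
        "entities": [e.name for e in entities],
        "entity_documents": sorted(entity_hits),
        "content_documents": sorted(content_hits),
        "documents_in_both": sorted(entity_hits & content_hits),
    }


print(search("BERT"))
```

The design choice in this sketch is to keep the two lookups separate and merge them only at the end, which mirrors the idea of two indexes exchanging information through an interface rather than one index replacing the other.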

We’re just starting to feel the impact of entity-based search in the SERPs as Google is slow to understand the meaning of individual entities.

Entities are understood top-down by social relevance. The most relevant ones are recorded in Wikidata and Wikipedia.

The big task will be to identify and verify long-tail entities. It is also unclear which criteria Google checks for including an entity in the Knowledge Graph.

In a German Webmaster Hangout in January 2019, Google’s John Mueller said they were working on a more straightforward way to create entities for everyone.

NLP plays a vital role in tackling this challenge at scale. Examples from the Diffbot demo show how well NLP can be used for entity mining and constructing a Knowledge Graph.

NLP in Google search is here to stay

RankBrain was introduced to interpret search queries and terms via vector space analysis that had not previously been used in this way.

BERT and MUM use natural language processing to interpret search queries and documents.

In addition to the interpretation of search queries and content, MUM and BERT opened the door to allow a knowledge database such as the Knowledge Graph to grow at scale, thus advancing semantic search at Google.

The developments in Google Search through the core updates are also closely related to MUM and BERT, and ultimately, NLP and semantic search.

In the future, we will see more and more entity-based Google search results replacing classic phrase-based indexing and ranking.

The post How Google uses NLP to better understand search queries, content appeared first on Search Engine Land.

via Search Engine Land https://searchengineland.com/how-google-uses-nlp-to-better-understand-search-queries-content-387340
